Notes on deploying the Ansible AWX platform (awx-operator 0.30.0) on K8s with Helm

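Foreword

- These are some notes on deploying AWX on K8s with Helm, shared with fellow readers.
- The post covers the deployment process and how the problems encountered along the way were solved.
- How to use this post:
  - Some familiarity with K8s is needed.
  - A ready K8s + Helm environment is needed.
  - Network access to registries that are blocked in mainland China (for example gcr.io) is needed, typically through a proxy.
- If anything here is misunderstood, corrections are welcome.

Well, I hope the pandemic ends soon ^_^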

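Introduction

A brief introduction to AWX: it provides a web-based user interface, a REST API, and a task engine built on top of Ansible. It is one of the upstream projects of Red Hat Ansible Automation Platform, and is the open-source counterpart of Red Hat's subscription product Ansible Tower.

On physical machines AWX can be deployed as a single node, as a single node with a remote database, or as a high-availability cluster. Here we use awx-operator, the K8s-based deployment option for AWX, and install it with Helm for convenience. The default configuration is a single-instance setup in which AWX and PostgreSQL run on the same node; that node needs at least 4G of memory and at least 20G of storage.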

More about AWX, project page: https://github.com/ansible/awx

If you need the subscription version, Ansible Tower: https://docs.ansible.com/ansible-tower/index.html

To install AWX, see the installation guides:

- AWX installation guide: https://github.com/ansible/awx/blob/devel/INSTALL.md
- awx-operator installation guide: https://github.com/ansible/awx-operator
- Helm installation: https://github.com/ansible/awx-operator/blob/devel/.helm/starter/README.md

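About awx-operator: it is an Ansible AWX Operator for Kubernetes, built with the Operator SDK and Ansible. An Operator can be loosely understood here as the concrete implementation behind a custom resource (CustomResourceDefinition) that describes how AWX is deployed. The custom resource objects present after AWX is deployed are shown below.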

    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$kubectl get awxs,awxrestores,awxbackups
    NAME                           AGE
    awx.awx.ansible.com/awx-demo   14h
    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$kubectl describe awx awx-demo
    Name:         awx-demo
    Namespace:    awx
    Labels:       app.kubernetes.io/component=awx
                  app.kubernetes.io/managed-by=awx-operator
                  app.kubernetes.io/name=awx-demo
                  app.kubernetes.io/operator-version=0.30.0
                  app.kubernetes.io/part-of=awx-demo
    Annotations:  <none>
    API Version:  awx.ansible.com/v1beta1
    Kind:         AWX
    Metadata:
      Creation Timestamp:  2022-10-15T02:49:58Z
      Generation:          1
      Managed Fields:
        API Version:  awx.ansible.com/v1beta1
        .........................

About Helm: it can be loosely thought of as similar to roles in Ansible, or to package managers such as yum, Maven, and npm. It is used to define, install, and upgrade complex applications deployed on Kubernetes. Helm describes application software as Charts, which makes it easy to create, version, share, and publish complex applications.

Environment requirements

A ready K8s cluster is needed; version 1.22 is used here.

    ┌──[root@vms81.liruilongs.github.io]-[~]
    └─$kubectl get nodes
    NAME                         STATUS   ROLES                  AGE    VERSION
    vms81.liruilongs.github.io   Ready    control-plane,master   301d   v1.22.2
    vms82.liruilongs.github.io   Ready    <none>                 301d   v1.22.2
    vms83.liruilongs.github.io   Ready    <none>                 301d   v1.22.2
    ┌──[root@vms81.liruilongs.github.io]-[~]
    └─$

Helm must already be installed.

    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$helm version
    version.BuildInfo{Version:"v3.2.1", GitCommit:"fe51cd1e31e6a202cba7dead9552a6d418ded79a", GitTreeState:"clean", GoVersion:"go1.13.10"}

Node information (OS and kernel)

    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$hostnamectl
       Static hostname: vms81.liruilongs.github.io
             Icon name: computer-vm
               Chassis: vm
            Machine ID: a5d2de32a7d4411d9c12cd390b672d32
               Boot ID: 1fd2c0810f6d4058a224d1ff966c0e09
        Virtualization: vmware
      Operating System: CentOS Linux 7 (Core)
           CPE OS Name: cpe:/o:centos:centos:7
                Kernel: Linux 3.10.0-1160.76.1.el7.x86_64
          Architecture: x86-64
    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$

Helm deployment

Add the Helm repository for awx-operator

    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$helm repo add awx-operator https://ansible.github.io/awx-operator/
    "awx-operator" has been added to your repositories
    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$helm repo update
    Hang tight while we grab the latest from your chart repositories...
    ...Successfully got an update from the "liruilong_repo" chart repository
    ...Successfully got an update from the "elastic" chart repository
    ...Successfully got an update from the "prometheus-community" chart repository
    ...Successfully got an update from the "azure" chart repository
    ...Unable to get an update from the "ali" chart repository (https://apphub.aliyuncs.com):
            failed to fetch https://apphub.aliyuncs.com/index.yaml : 504 Gateway Timeout
    ...Successfully got an update from the "awx-operator" chart repository
    ...Successfully got an update from the "stable" chart repository
    Update Complete. ⎈ Happy Helming!⎈

Search for the awx-operator chart

    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$helm search repo awx-operator
    NAME                        CHART VERSION   APP VERSION   DESCRIPTION
    awx-operator/awx-operator   0.30.0          0.30.0        A Helm chart for the AWX Operator

To install with custom parameters:

    helm install my-awx-operator awx-operator/awx-operator -n awx --create-namespace -f myvalues.yaml

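For a customized installation, enable the corresponding switches in myvalues.yaml; HTTPS, an external PG database, a load balancer, LDAP authentication, and so on can be configured there. A template can be obtained by pulling the chart package and using the values.yaml inside it as a starting point.

We install with the default configuration here, so no values file needs to be specified.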
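As a rough sketch of getting that template, the chart's default values can be dumped to a file and then edited. This assumes the `awx-operator` repo alias added earlier; the function name `dump_default_values` and the default output file name are made up for illustration, and `helm show values` is used here as an alternative to unpacking the chart with `helm pull --untar`.

```shell
#!/usr/bin/env bash
# Sketch only: write a chart's default values to a file that can serve as myvalues.yaml.
# dump_default_values is a hypothetical helper, not part of Helm or awx-operator.
dump_default_values() {
  local chart="${1:-awx-operator/awx-operator}"  # chart reference: <repo alias>/<chart name>
  local out="${2:-myvalues.yaml}"                # where to write the default values
  helm show values "$chart" > "$out"
}

# Usage (needs helm and access to the chart repository):
#   dump_default_values awx-operator/awx-operator myvalues.yaml
#   helm install my-awx-operator awx-operator/awx-operator -n awx --create-namespace -f myvalues.yaml
```

Only the switches that differ from the defaults need to stay in the file; everything else can be deleted before passing it with `-f`.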

    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$helm install -n awx --create-namespace my-awx-operator awx-operator/awx-operator
    NAME: my-awx-operator
    LAST DEPLOYED: Mon Oct 10 16:29:24 2022
    NAMESPACE: awx
    STATUS: deployed
    REVISION: 1
    TEST SUITE: None
    NOTES:
    AWX Operator installed with Helm Chart version 0.30.0
    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$

OK, the installation itself is done. However, many of the images have to be downloaded from outside networks, so some extra handling is needed. For convenience, switch the default namespace of the current context first.

    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$kubectl config set-context $(kubectl config current-context) --namespace=awx
    Context "kubernetes-admin@kubernetes" modified.
    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$

Check the pod status

    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$kubectl get pod -o wide
    NAME                                               READY   STATUS         RESTARTS   AGE   IP               NODE                         NOMINATED NODE   READINESS GATES
    awx-operator-controller-manager-79ff9599d8-mksmc   1/2     ErrImagePull   0          13m   10.244.171.167   vms82.liruilongs.github.io   <none>           <none>
    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$kubectl get pod
    NAME                                               READY   STATUS             RESTARTS   AGE
    awx-operator-controller-manager-79ff9599d8-mksmc   1/2     ImagePullBackOff   0          13m

The image pull failed, so the next step is to resolve that error.

    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$kubectl describe pod awx-operator-controller-manager-79ff9599d8-mksmc | grep -i event -A 30
    Events:
      Type     Reason     Age                   From               Message
      ----     ------     ----                  ----               -------
      Normal   Scheduled  14m                   default-scheduler  Successfully assigned awx/awx-operator-controller-manager-79ff9599d8-mksmc to vms82.liruilongs.github.io
      Normal   Pulling    14m                   kubelet            Pulling image "quay.io/ansible/awx-operator:0.30.0"
      Normal   Started    13m                   kubelet            Started container awx-manager
      Normal   Pulled     13m                   kubelet            Successfully pulled image "quay.io/ansible/awx-operator:0.30.0" in 20.52788571s
      Normal   Created    13m                   kubelet            Created container awx-manager
      Warning  Failed     13m (x3 over 14m)     kubelet            Failed to pull image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0": rpc error: code = Unknown desc = Error response from daemon: Get "https://gcr.io/v2/": net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)
      Warning  Failed     13m (x3 over 14m)     kubelet            Error: ErrImagePull
      Warning  Failed     12m (x5 over 13m)     kubelet            Error: ImagePullBackOff
      Normal   Pulling    12m (x4 over 14m)     kubelet            Pulling image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0"
      Warning  Failed     11m                   kubelet            Failed to pull image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0": rpc error: code = Unknown desc = Error response from daemon: Get "https://gcr.io/v2/": dial tcp 74.125.203.82:443: i/o timeout
      Normal   BackOff    4m23s (x35 over 13m)  kubelet            Back-off pulling image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0"
    ┌──[root@vms81.liruilongs.github.io]-[~/AWK]
    └─$

    Back-off pulling image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0"
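When the event list is long, it can help to reduce it to just the images that failed to pull. The filter below is a minimal sketch that assumes the event message format shown above (`Failed to pull image "<name>"`); the function name `failed_images` is made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch only: read `kubectl describe pod` output on stdin and print each
# image that appears in a 'Failed to pull image "..."' event, once.
failed_images() {
  grep -o 'Failed to pull image "[^"]*"' \
    | sed 's/^Failed to pull image "//; s/"$//' \
    | sort -u
}

# Usage (needs kubectl and a reachable cluster):
#   kubectl describe pod awx-operator-controller-manager-79ff9599d8-mksmc -n awx | failed_images
```

For the events above this leaves a single name, the kube-rbac-proxy image, which is the one that has to be fetched another way.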

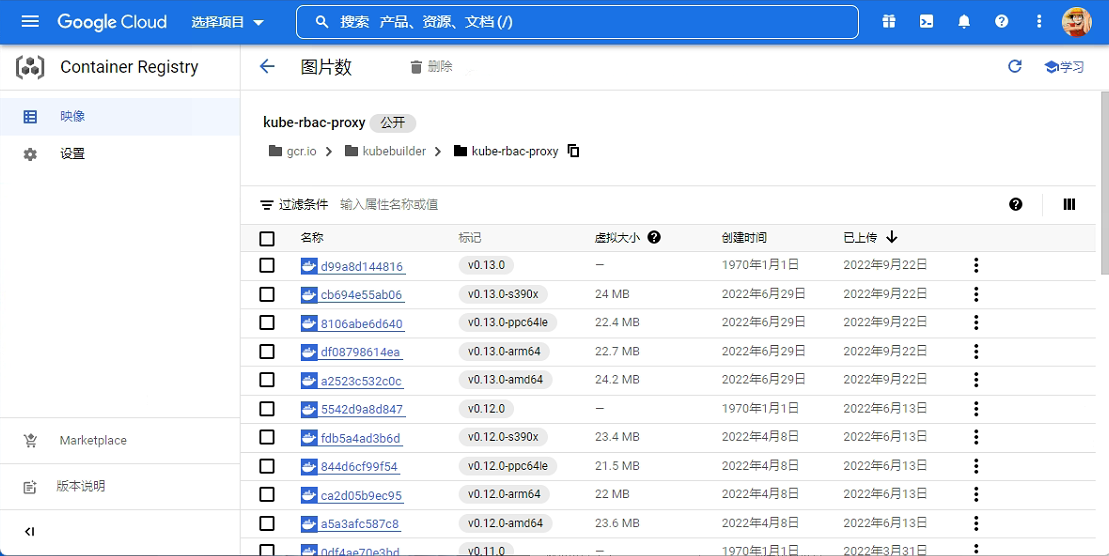

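This image can only be pulled through a proxy, so download it elsewhere and then import it locally. If you have a Google account, it can be downloaded in Google Cloud.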

| 下载步骤 |

|---|

|

|

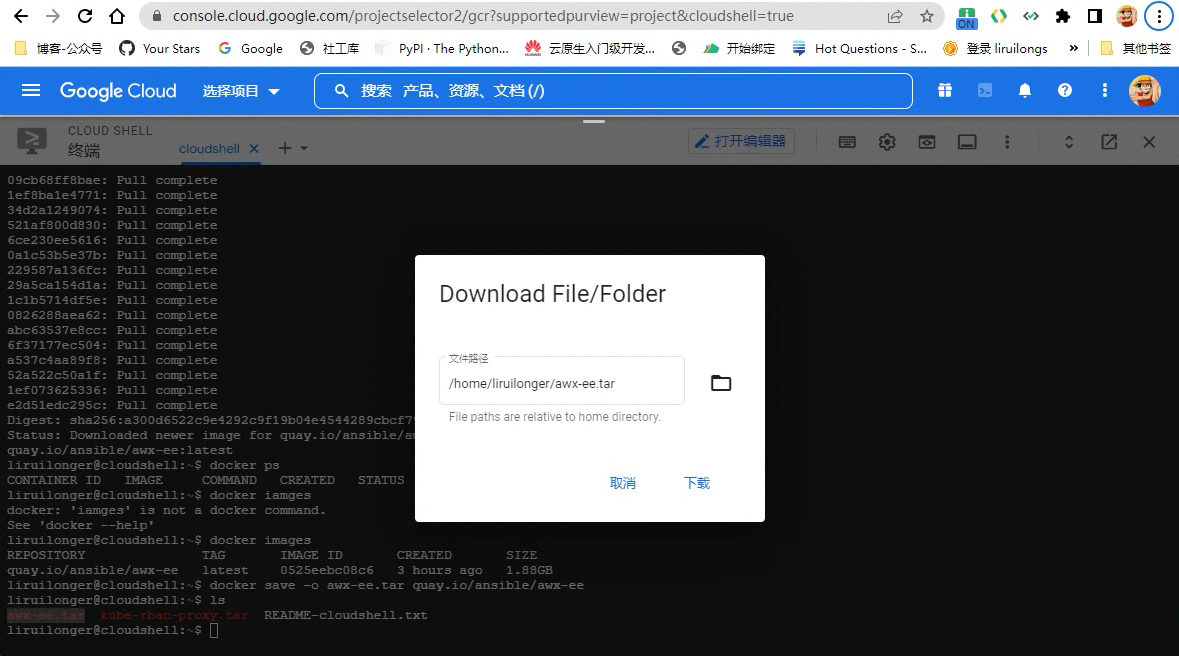

| 点击那个 shell 中运行,然后导出镜像 |

|

| 下载导出的镜像 |

|
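The export step could look roughly like the following on any machine that can reach gcr.io (Cloud Shell included). This is a sketch under that assumption; `save_image` is a made-up helper name, and the tarball name simply matches the file used in the next step.

```shell
#!/usr/bin/env bash
# Sketch only: pull an image and export it to a tarball that can be copied
# to the cluster nodes and loaded there with `docker load -i`.
save_image() {
  local image="$1"    # e.g. gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0
  local tarball="$2"  # e.g. kube-rbac-proxy.tar
  docker pull "$image" && docker save -o "$tarball" "$image"
}

# Usage (needs docker and network access to the registry):
#   save_image gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0 kube-rbac-proxy.tar
```

Saving by the full `name:tag` keeps the tag inside the tarball, so after `docker load` the image is already named the way the Pod spec expects.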

Upload it to the VM

    PS C:\Users\山河已无恙\Downloads> scp .\kube-rbac-proxy.tar root@192.168.26.81:~
    root@192.168.26.81's password:
    kube-rbac-proxy.tar                          100%   58MB 108.7MB/s   00:00
    PS C:\Users\山河已无恙\Downloads>

Import the image on the nodes

    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$ansible node -m copy -a 'dest=/root/ src=../kube-rbac-proxy.tar'
    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$ansible node -m shell -a "docker load -i /root/kube-rbac-proxy.tar"

OK, this Pod is healthy now.

    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$kubectl get pods -owide
    NAME                                               READY   STATUS    RESTARTS   AGE   IP               NODE                         NOMINATED NODE   READINESS GATES
    awx-operator-controller-manager-79ff9599d8-mksmc   2/2     Running   0          19h   10.244.171.167   vms82.liruilongs.github.io   <none>           <none>
    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$

Check the events to confirm

    Events:
      Type     Reason   Age                     From     Message
      ----     ------   ----                    ----     -------
      Warning  Failed   41m (x187 over 19h)     kubelet  Failed to pull image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0": rpc error: code = Unknown desc = Error response from daemon: Get "https://gcr.io/v2/": net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)
      Normal   Pulling  36m (x214 over 19h)     kubelet  Pulling image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0"
      Normal   BackOff  6m31s (x4861 over 19h)  kubelet  Back-off pulling image "gcr.io/kubebuilder/kube-rbac-proxy:v0.13.0"
      Normal   Pulled   88s

Other resources have still not been created, PG among them. Check the log of the awx-manager container in the Pod to troubleshoot.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl logs awx-operator-controller-manager-79ff9599d8-mksmc -c awx-manager

The playbook run fails with: unable to retrieve the complete list of server APIs

    --------------------------- Ansible Task StdOut -------------------------------
    TASK [Verify imagePullSecrets] *************************************************
    task path: /opt/ansible/playbooks/awx.yml:10
    -------------------------------------------------------------------------------
    I1015 11:09:32.772623       8 request.go:601] Waited for 1.048239742s due to client-side throttling, not priority and fairness, request: GET:https://10.96.0.1:443/apis/autoscaling/v2beta2?timeout=32s
    {"level": "error","ts": 1665832173.374363,"logger": "proxy","msg": "Unable to determine if virtual resource","gvk": "/v1, Kind=Secret","error": "unable to retrieve the complete list of server APIs: metrics.k8s.io/v1beta1: an error on the server (\"Internal Server Error: \\\"/apis/metrics.k8s.io/v1beta1?timeout=32s\\\": the server could not find the requested resource\") has prevented the request from succeeding","stacktrace": "github.com/operator-framework/operator-sdk/internal/ansible/proxy.(*cacheResponseHandler).ServeHTTP\n\t/workspace/internal/ansible/proxy/cache_response.go:97\nnet/http.serverHandler.ServeHTTP\n\t/usr/local/go/src/net/http/server.go:2916\nnet/http.(*conn).serve\n\t/usr/local/go/src/net/http/server.go:1966"}

A fix for this problem was found in the following issues:

- https://github.com/kiali/kiali/issues/3239

- https://github.com/helm/helm/issues/6361#issuecomment-538220109

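For the specific steps, see: https://www.cnblogs.com/liruilong/p/16795064.html

After the problem is fixed, run helm repo update again and then redeploy. The repo update can be skipped; I needed it only because my network is unreliable.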
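The error message names metrics.k8s.io/v1beta1, which points at an aggregated API whose backing service is not answering. As a starting point for the diagnosis, the sketch below lists APIService objects reported as unavailable. It assumes the usual `kubectl get apiservice` column order (NAME, SERVICE, AVAILABLE, AGE); the function name `unavailable_apiservices` is made up for illustration, and what to do with a broken entry (repair the backing service or remove the stale APIService) is left to the linked write-ups.

```shell
#!/usr/bin/env bash
# Sketch only: read `kubectl get apiservice` output on stdin and print the
# names whose AVAILABLE column starts with "False".
unavailable_apiservices() {
  # NR > 1 skips the header row; $3 is the AVAILABLE column.
  awk 'NR > 1 && $3 ~ /^False/ { print $1 }'
}

# Usage (needs kubectl and a reachable cluster):
#   kubectl get apiservice | unavailable_apiservices
```

An empty result means every registered API group is reachable, which is the state the operator needs before its playbook can list server APIs.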

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$helm repo update
    Hang tight while we grab the latest from your chart repositories...
    ...Successfully got an update from the "liruilong_repo" chart repository
    ...Successfully got an update from the "elastic" chart repository
    ...Successfully got an update from the "prometheus-community" chart repository
    ...Successfully got an update from the "azure" chart repository
    ...Unable to get an update from the "ali" chart repository (https://apphub.aliyuncs.com):
            failed to fetch https://apphub.aliyuncs.com/index.yaml : 504 Gateway Timeout
    ...Successfully got an update from the "awx-operator" chart repository
    ...Successfully got an update from the "stable" chart repository
    Update Complete. ⎈ Happy Helming!⎈

Because the release was already installed earlier, an upgrade is enough here.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$helm upgrade my-awx-operator awx-operator/awx-operator -n awx --create-namespace
    Release "my-awx-operator" has been upgraded. Happy Helming!
    NAME: my-awx-operator
    LAST DEPLOYED: Sat Oct 15 21:16:28 2022
    NAMESPACE: awx
    STATUS: deployed
    REVISION: 3
    TEST SUITE: None
    NOTES:
    AWX Operator installed with Helm Chart version 0.30.0

Check the log again to confirm; it is fine as long as there are no errors.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl logs awx-operator-controller-manager-79ff9599d8-2v5fn -c awx-manager

Check the Pod status again

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get pods
    NAME                                               READY   STATUS    RESTARTS   AGE
    awx-demo-postgres-13-0                             0/1     Pending   0          105s
    awx-operator-controller-manager-79ff9599d8-2v5fn   2/2     Running   0          128m
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get svc
    NAME                                              TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
    awx-demo-postgres-13                              ClusterIP   None            <none>        5432/TCP   5m48s
    awx-operator-controller-manager-metrics-service   ClusterIP   10.107.17.167   <none>        8443/TCP   132m

The PG pod, awx-demo-postgres-13-0, is Pending. Check its events.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl describe pods awx-demo-postgres-13-0 | grep -i -A 10 event
    Events:
      Type     Reason            Age                  From               Message
      ----     ------            ----                 ----               -------
      Warning  FailedScheduling  23s (x8 over 7m31s)  default-scheduler  0/3 nodes are available: 3 pod has unbound immediate PersistentVolumeClaims.
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get pvc
    NAME                                 STATUS    VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    postgres-13-awx-demo-postgres-13-0   Pending                                                     10m
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl describe pvc postgres-13-awx-demo-postgres-13-0 | grep -i -A 10 event
    Events:
      Type    Reason         Age                 From                         Message
      ----    ------         ----                ----                         -------
      Normal  FailedBinding  82s (x42 over 11m)  persistentvolume-controller  no persistent volumes available for this claim and no storage class is set
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get sc
    No resources found

OK, the Pod is Pending because there is no default SC.

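For a stateful application, a default SC (dynamic volume provisioning) should exist before the StatefulSet is created. The SC takes care of dynamically provisioning a PV for each PVC, and that PV is what PG uses for its data. So an SC has to be created here, and before that a provisioner is needed; the provisioner determines which backend storage is used when PVs are created dynamically.

For convenience, local storage is used as the backend here. In general a PV is backed by network storage that does not belong to any node, which is why NFS is a common choice. The SC names its provisioner in the provisioner field. Once the StorageClass exists and is marked as default, PVCs that do not name a class get their storage from the default SC.

Creating the provisioner and the SC: https://github.com/rancher/local-path-provisioner

The YAML file could not be downloaded from the node, so I opened it in a browser, copied the content, and applied it from a local file. My cluster had no SC to begin with; if your cluster already has one, just set it as the default.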
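To see quickly whether a cluster already has a default SC, the `kubectl get sc` listing can be filtered for the `(default)` marker that kubectl prints next to the name. This is a minimal sketch that relies on that display convention; the function name `default_storageclasses` is made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch only: read `kubectl get sc` output on stdin and print the names of
# StorageClasses that kubectl marks with "(default)" after the name.
default_storageclasses() {
  # NR > 1 skips the header row; the marker shows up as the second field.
  awk 'NR > 1 && $2 == "(default)" { print $1 }'
}

# Usage (needs kubectl and a reachable cluster):
#   kubectl get sc | default_storageclasses
```

No output means PVCs without an explicit storageClassName will stay Pending, which matches the "no storage class is set" event seen on the PG claim.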

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl apply -f https://raw.githubusercontent.com/rancher/local-path-provisioner/v0.0.22/deploy/local-path-storage.yaml
    The connection to the server raw.githubusercontent.com was refused - did you specify the right host or port?
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$wget https://raw.githubusercontent.com/rancher/local-path-provisioner/v0.0.22/deploy/local-path-storage.yaml
    --2022-10-15 21:45:02--  https://raw.githubusercontent.com/rancher/local-path-provisioner/v0.0.22/deploy/local-path-storage.yaml
    Resolving raw.githubusercontent.com (raw.githubusercontent.com)... 0.0.0.0, ::
    Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|0.0.0.0|:443... failed: Connection refused.
    Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|::|:443... failed: Connection refused.
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$vim local-path-storage.yaml
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$ [New] 128L, 2932C written
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get sc -A
    No resources found
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$mkdir -p /opt/local-path-provisioner
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl apply -f local-path-storage.yaml
    namespace/local-path-storage created
    serviceaccount/local-path-provisioner-service-account created
    clusterrole.rbac.authorization.k8s.io/local-path-provisioner-role created
    clusterrolebinding.rbac.authorization.k8s.io/local-path-provisioner-bind created
    deployment.apps/local-path-provisioner created
    storageclass.storage.k8s.io/local-path created
    configmap/local-path-config created
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$

Confirm that it was created

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get sc
    NAME         PROVISIONER             RECLAIMPOLICY   VOLUMEBINDINGMODE      ALLOWVOLUMEEXPANSION   AGE
    local-path   rancher.io/local-path   Delete          WaitForFirstConsumer   false                  2m6s

Set it as the default SC: https://kubernetes.io/zh-cn/docs/tasks/administer-cluster/change-default-storage-class/

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl patch storageclass local-path -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
    storageclass.storage.k8s.io/local-path patched
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get pods
    NAME                                               READY   STATUS    RESTARTS   AGE
    awx-demo-postgres-13-0                             0/1     Pending   0          46m
    awx-operator-controller-manager-79ff9599d8-2v5fn   2/2     Running   0          173m

The existing PVC was created before the default SC existed, so export it to a YAML file, delete it, and create it again.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get pvc postgres-13-awx-demo-postgres-13-0 -o yaml > postgres-13-awx-demo-postgres-13-0.yaml
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl delete -f postgres-13-awx-demo-postgres-13-0.yaml
    persistentvolumeclaim "postgres-13-awx-demo-postgres-13-0" deleted
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl apply -f postgres-13-awx-demo-postgres-13-0.yaml
    persistentvolumeclaim/postgres-13-awx-demo-postgres-13-0 created

Check the PVC status. It takes a moment; Bound means the claim has been bound to a volume.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get pvc
    NAME                                 STATUS    VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    postgres-13-awx-demo-postgres-13-0   Pending                                      local-path     3s
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl describe pvc postgres-13-awx-demo-postgres-13-0 | grep -i -A 10 event
    Events:
      Type    Reason                 Age  From                                                                                                Message
      ----    ------                 ---- ----                                                                                                -------
      Normal  WaitForPodScheduled    42s  persistentvolume-controller                                                                         waiting for pod awx-demo-postgres-13-0 to be scheduled
      Normal  ExternalProvisioning   41s  persistentvolume-controller                                                                         waiting for a volume to be created, either by external provisioner "rancher.io/local-path" or manually created by system administrator
      Normal  Provisioning           41s  rancher.io/local-path_local-path-provisioner-7c795b5576-gmrx4_d69ca393-bcbe-4abb-8b22-cd8db3b26bf8  External provisioner is provisioning volume for claim "awx/postgres-13-awx-demo-postgres-13-0"
      Normal  ProvisioningSucceeded  39s  rancher.io/local-path_local-path-provisioner-7c795b5576-gmrx4_d69ca393-bcbe-4abb-8b22-cd8db3b26bf8  Successfully provisioned volume pvc-44b7687c-de18-45d2-bef6-8fb2d1c415d3
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get pvc
    NAME                                 STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    postgres-13-awx-demo-postgres-13-0   Bound    pvc-44b7687c-de18-45d2-bef6-8fb2d1c415d3   8Gi        RWO            local-path     53s
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$
    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$kubectl get pv
    NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                                    STORAGECLASS   REASON   AGE
    pvc-44b7687c-de18-45d2-bef6-8fb2d1c415d3   8Gi        RWO            Delete           Bound    awx/postgres-13-awx-demo-postgres-13-0   local-path              54s

Check the Pod status again. The PG Pod has been created successfully, but the init container of awx-demo-65d9bf775b-hc58x has not started yet, most likely because its image cannot be pulled.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl get pods -o wide
    NAME                                               READY   STATUS     RESTARTS   AGE     IP               NODE                         NOMINATED NODE   READINESS GATES
    awx-demo-65d9bf775b-hc58x                          0/4     Init:0/1   0          4m42s   <none>           vms82.liruilongs.github.io   <none>           <none>
    awx-demo-postgres-13-0                             1/1     Running    0          68m     10.244.171.180   vms82.liruilongs.github.io   <none>           <none>
    awx-operator-controller-manager-79ff9599d8-m7t8k   2/2     Running    0          7m3s    10.244.171.178   vms82.liruilongs.github.io   <none>           <none>
    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$kubectl describe pod awx-demo-65d9bf775b-hc58x | grep -i -A 10 event
    Events:
      Type    Reason     Age    From               Message
      ----    ------     ----   ----               -------
      Normal  Scheduled  4m47s  default-scheduler  Successfully assigned awx/awx-demo-65d9bf775b-hc58x to vms82.liruilongs.github.io
      Normal  Pulling    4m46s  kubelet            Pulling image "quay.io/ansible/awx-ee:latest"

OK, so we bring this image in the same way as before.

    ┌──[root@vms81.liruilongs.github.io]-[~/awx/awx-operator]
    └─$cd /root/ansible/
    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$ansible node -m copy -a 'dest=/root/ src=../awx-ee.tar'
    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$ansible node -m shell -a "docker load -i /root/awx-ee.tar"

Check which other images the Pod uses

    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$kubectl describe pods awx-demo-65d9bf775b-hc58x | grep -i image:
        Image:         quay.io/ansible/awx-ee:latest
        Image:         docker.io/redis:7
        Image:         quay.io/ansible/awx:21.7.0
        Image:         quay.io/ansible/awx:21.7.0
        Image:         quay.io/ansible/awx-ee:latest
    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$

The images can also be pulled manually on the worker node, to confirm that every one of them pulls successfully.

    ┌──[root@vms82.liruilongs.github.io]-[~]
    └─$docker pull quay.io/ansible/awx:21.7.0
    21.7.0: Pulling from ansible/awx
    Digest: sha256:bca920f96fc6a77b72c4442088b53a90b22162cfa90503d3dcda4577afee58f8
    Status: Image is up to date for quay.io/ansible/awx:21.7.0
    quay.io/ansible/awx:21.7.0
    ┌──[root@vms82.liruilongs.github.io]-[~]
    └─$docker pull docker.io/redis:7
    7: Pulling from library/redis
    Digest: sha256:c95835a74c37b3a784fb55f7b2c211bd20c650d5e55dae422c3caa9c01eb39fa
    Status: Image is up to date for redis:7
    docker.io/library/redis:7
    ┌──[root@vms82.liruilongs.github.io]-[~]
    └─$docker pull quay.io/ansible/awx-ee:latest
    latest: Pulling from ansible/awx-ee
    Digest: sha256:a300d6522c9e4292c9f19b04e4544289cbcf7926bde4001131582f254d191494
    Status: Image is up to date for quay.io/ansible/awx-ee:latest
    quay.io/ansible/awx-ee:latest
    ┌──[root@vms82.liruilongs.github.io]-[~]
    └─$

Wait a while here, and all the Pods become healthy.

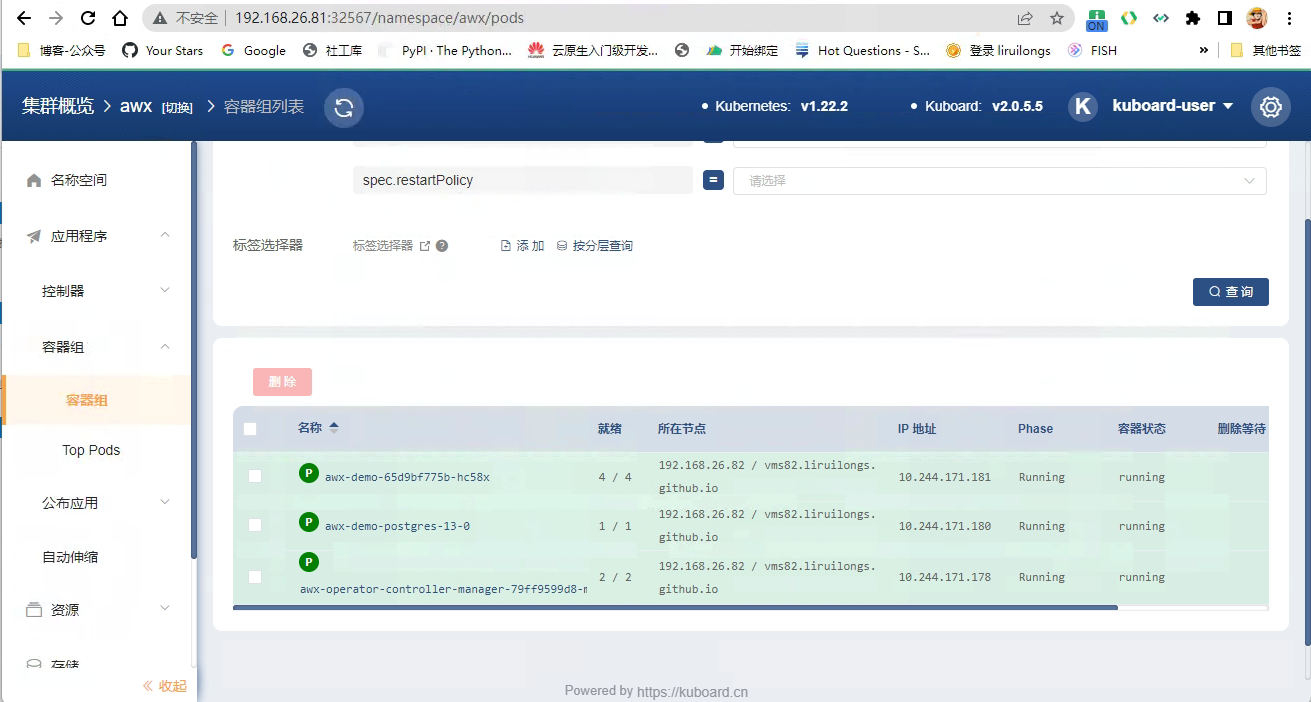

    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$kubectl get pods
    NAME                                               READY   STATUS    RESTARTS   AGE
    awx-demo-65d9bf775b-hc58x                          4/4     Running   0          79m
    awx-demo-postgres-13-0                             1/1     Running   0          143m
    awx-operator-controller-manager-79ff9599d8-m7t8k   2/2     Running   0          81m

Check the SVC and test access

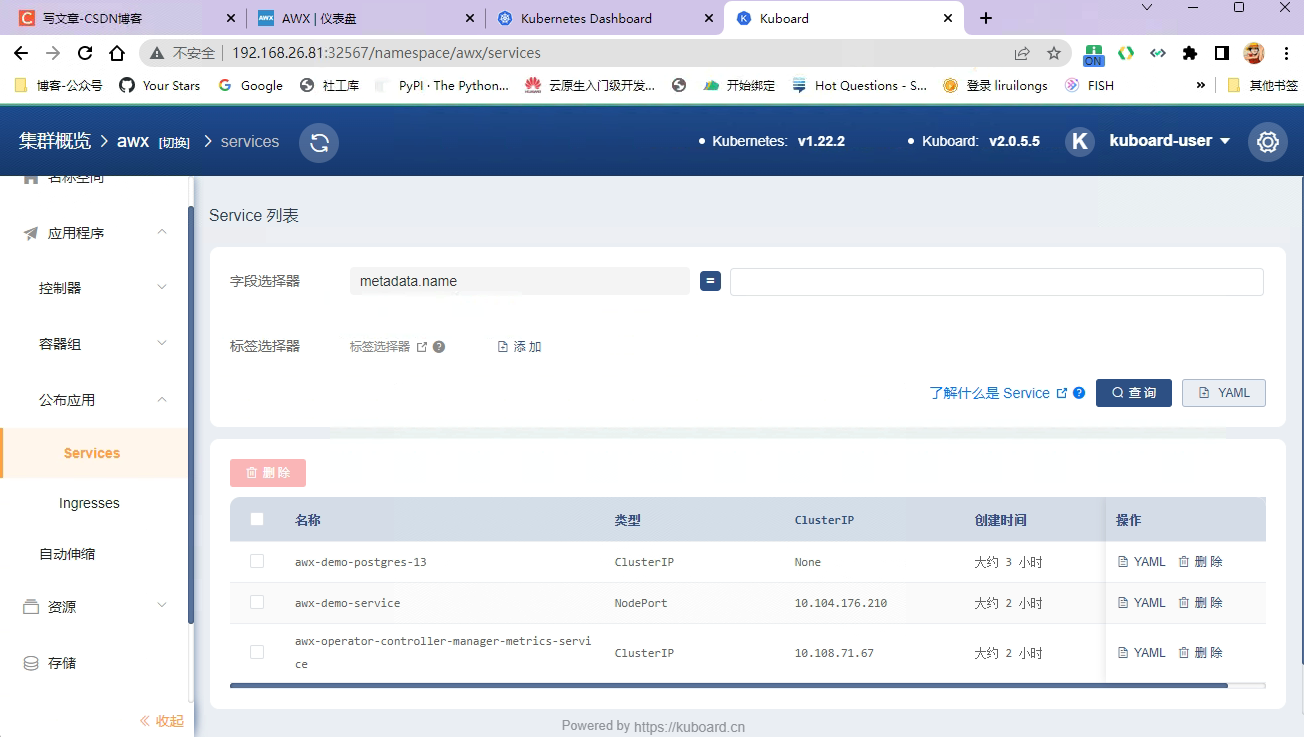

    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$kubectl get svc
    NAME                                              TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)        AGE
    awx-demo-postgres-13                              ClusterIP   None             <none>        5432/TCP       143m
    awx-demo-service                                  NodePort    10.104.176.210   <none>        80:30066/TCP   79m
    awx-operator-controller-manager-metrics-service   ClusterIP   10.108.71.67     <none>        8443/TCP       82m
    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$curl 192.168.26.82:30066
    <!doctype html><html lang="en"><head><script nonce="cw6jhvbF7S5bfKJPsimyabathhaX35F5hIyR7emZNT0=" type="text/javascript">window.....
    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$

Get the password

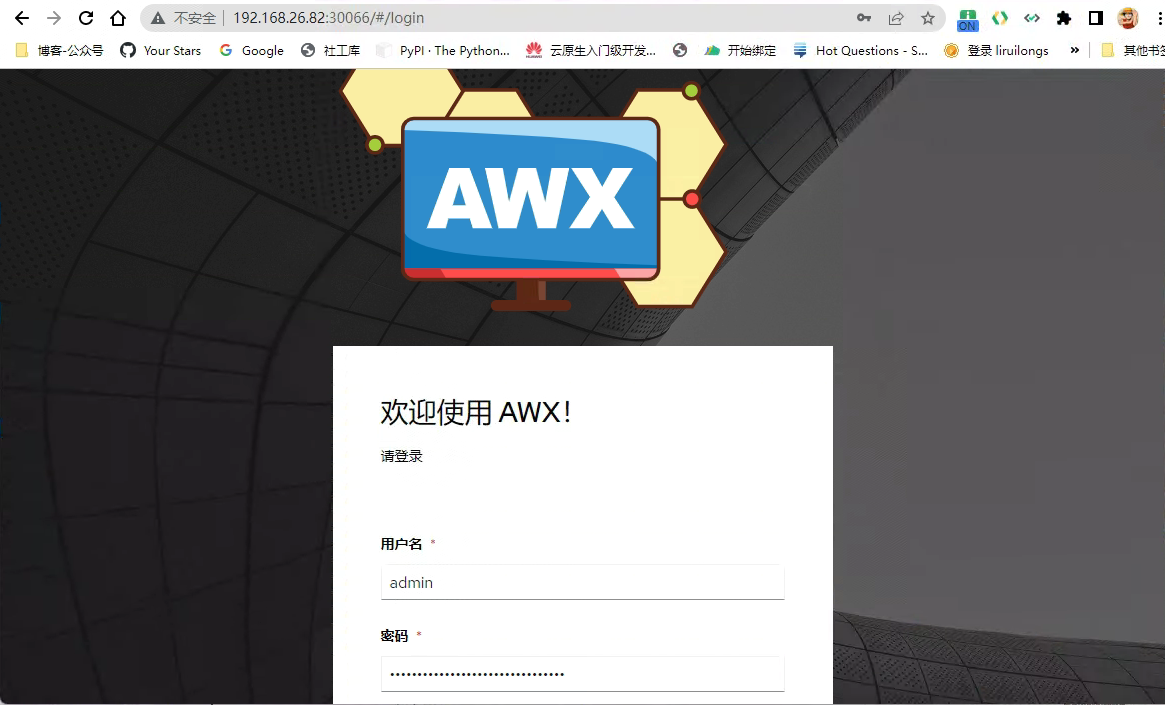

    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$kubectl get secrets
    NAME                                          TYPE                                  DATA   AGE
    awx-demo-admin-password                       Opaque                                1      146m
    awx-demo-app-credentials                      Opaque                                3      82m
    awx-demo-broadcast-websocket                  Opaque                                1      146m
    awx-demo-postgres-configuration               Opaque                                6      146m
    awx-demo-receptor-ca                          kubernetes.io/tls                     2      82m
    awx-demo-receptor-work-signing                Opaque                                2      82m
    awx-demo-secret-key                           Opaque                                1      146m
    awx-demo-token-sc92t                          kubernetes.io/service-account-token   3      82m
    awx-operator-controller-manager-token-tpv2m   kubernetes.io/service-account-token   3      84m
    default-token-864fk                           kubernetes.io/service-account-token   3      4h32m
    redhat-operators-pull-secret                  Opaque                                1      146m
    sh.helm.release.v1.my-awx-operator.v1         helm.sh/release.v1                    1      84m
    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$echo $(kubectl get secret awx-demo-admin-password -o jsonpath="{.data.password}" | base64 --decode)
    tP59YoIWSS6NgCUJYQUG4cXXJIaIc7ci
    ┌──[root@vms81.liruilongs.github.io]-[~/awx-operator/crds]
    └─$

Access test

The service is published as a NodePort by default, so it can be reached from any IP in the same subnet through a node IP plus the port: http://192.168.26.82:30066/#/login
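The node port is the number after the colon in the PORT(S) column of `kubectl get svc` (here `80:30066/TCP`). A small sketch of turning that value and a node IP into the URL follows; `node_port` and `awx_url` are made-up helper names, and the IP is the worker node used throughout this post.

```shell
#!/usr/bin/env bash
# Sketch only: derive the access URL from a node IP and a PORT(S) value.

# node_port "80:30066/TCP" prints "30066".
node_port() {
  local ports="$1"
  ports="${ports#*:}"             # drop the service port and the colon
  printf '%s\n' "${ports%%/*}"    # drop the "/TCP" protocol suffix
}

# awx_url <node-ip> <PORT(S) value> prints the base URL of the web UI.
awx_url() {
  printf 'http://%s:%s/\n' "$1" "$(node_port "$2")"
}

# Usage:
#   awx_url 192.168.26.82 "80:30066/TCP"
# The port can also be read directly from the Service (needs kubectl and a cluster):
#   kubectl get svc awx-demo-service -n awx -o jsonpath='{.spec.ports[0].nodePort}'
```

Any node's IP works with a NodePort service, not only the node the Pod runs on.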

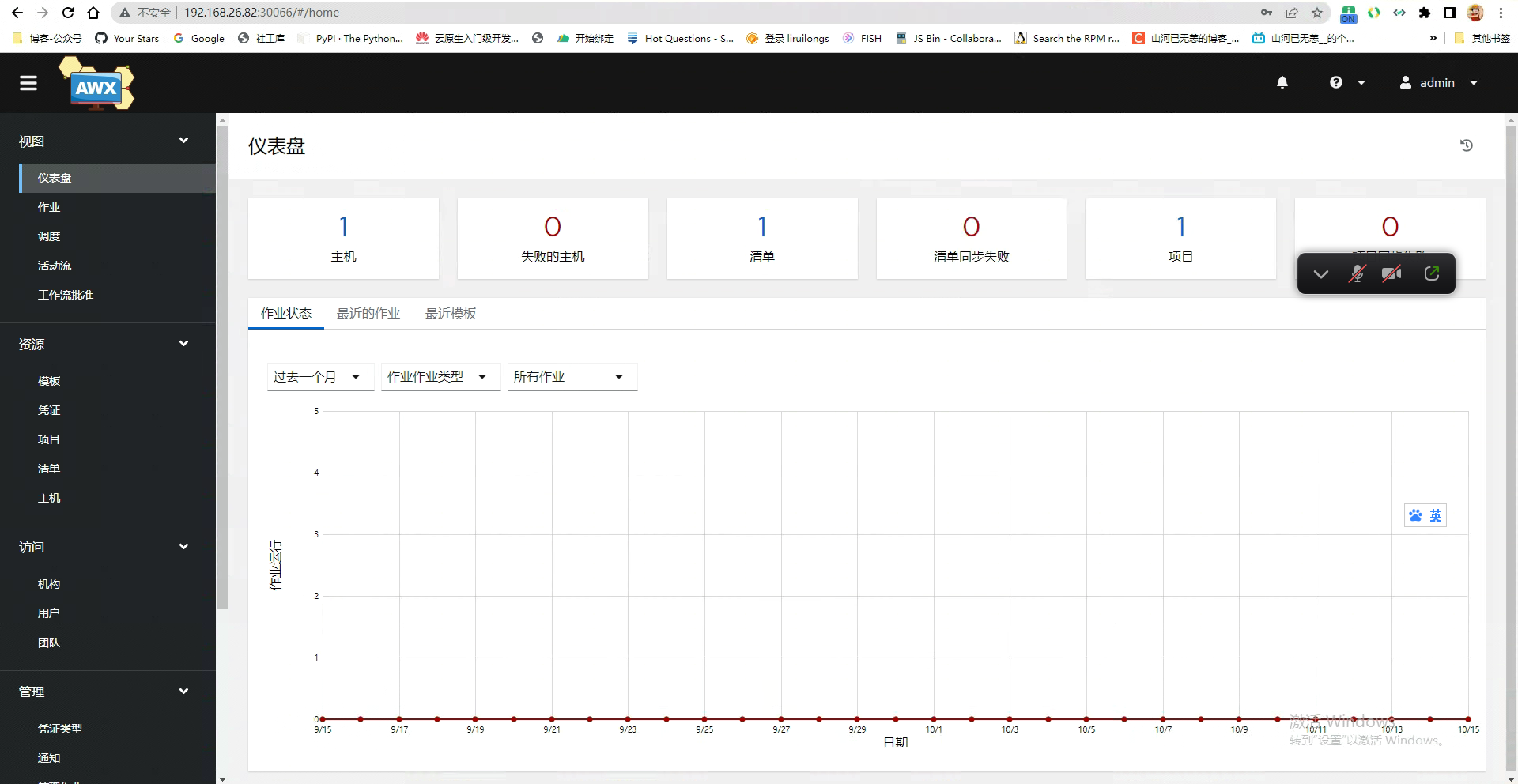

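I did not expect the interface to come up in Chinese; the internationalization is clearly well done…

If you have a dashboard tool, you can take a quick look at the resources involved.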

| Some of the resources |
|---|

View all resources from the command line

    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$kubectl api-resources -o name --verbs=list --namespaced | xargs -n 1 kubectl get --show-kind --ignore-not-found -n awx
    NAME                                        DATA   AGE
    configmap/awx-demo-awx-configmap            5      116m
    configmap/awx-operator                      0      5h7m
    configmap/awx-operator-awx-manager-config   1      119m
    configmap/kube-root-ca.crt                  1      5h7m
    NAME                                                        ENDPOINTS             AGE
    endpoints/awx-demo-postgres-13                              10.244.171.180:5432   3h
    endpoints/awx-demo-service                                  10.244.171.181:8052   116m
    endpoints/awx-operator-controller-manager-metrics-service   10.244.171.178:8443   119m
    LAST SEEN   TYPE     REASON    OBJECT                          MESSAGE
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Successfully pulled image "quay.io/ansible/awx-ee:latest" in 1h16m36.915786211s
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container init
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container init
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "docker.io/redis:7" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container redis
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container redis
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "quay.io/ansible/awx:21.7.0" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container awx-demo-web
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container awx-demo-web
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "quay.io/ansible/awx:21.7.0" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container awx-demo-task
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container awx-demo-task
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "quay.io/ansible/awx-ee:latest" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container awx-demo-ee
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container awx-demo-ee
    NAME                                                       STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    persistentvolumeclaim/postgres-13-awx-demo-postgres-13-0   Bound    pvc-44b7687c-de18-45d2-bef6-8fb2d1c415d3   8Gi        RWO            local-path     117m
    NAME                                                   READY   STATUS    RESTARTS   AGE
    pod/awx-demo-65d9bf775b-hc58x                          4/4     Running   0          116m
    pod/awx-demo-postgres-13-0                             1/1     Running   0          3h
    pod/awx-operator-controller-manager-79ff9599d8-m7t8k   2/2     Running   0          119m
    NAME                                                 TYPE                                  DATA   AGE
    secret/awx-demo-admin-password                       Opaque                                1      3h
    secret/awx-demo-app-credentials                      Opaque                                3      116m
    secret/awx-demo-broadcast-websocket                  Opaque                                1      3h
    secret/awx-demo-postgres-configuration               Opaque                                6      3h
    secret/awx-demo-receptor-ca                          kubernetes.io/tls                     2      116m
    secret/awx-demo-receptor-work-signing                Opaque                                2      116m
    secret/awx-demo-secret-key                           Opaque                                1      3h
    secret/awx-demo-token-sc92t                          kubernetes.io/service-account-token   3      116m
    secret/awx-operator-controller-manager-token-tpv2m   kubernetes.io/service-account-token   3      119m
    secret/default-token-864fk                           kubernetes.io/service-account-token   3      5h7m
    secret/redhat-operators-pull-secret                  Opaque                                1      3h
    secret/sh.helm.release.v1.my-awx-operator.v1         helm.sh/release.v1                    1      119m
    NAME                                             SECRETS   AGE
    serviceaccount/awx-demo                          1         116m
    serviceaccount/awx-operator-controller-manager   1         119m
    serviceaccount/default                           1         5h7m
    NAME                                                      TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)        AGE
    service/awx-demo-postgres-13                              ClusterIP   None             <none>        5432/TCP       3h
    service/awx-demo-service                                  NodePort    10.104.176.210   <none>        80:30066/TCP   116m
    service/awx-operator-controller-manager-metrics-service   ClusterIP   10.108.71.67     <none>        8443/TCP       119m
    NAME                                                      CONTROLLER                              REVISION   AGE
    controllerrevision.apps/awx-demo-postgres-13-85958bcbcd   statefulset.apps/awx-demo-postgres-13   1          3h
    NAME                                              READY   UP-TO-DATE   AVAILABLE   AGE
    deployment.apps/awx-demo                          1/1     1            1           116m
    deployment.apps/awx-operator-controller-manager   1/1     1            1           119m
    NAME                                                         DESIRED   CURRENT   READY   AGE
    replicaset.apps/awx-demo-65d9bf775b                          1         1         1       116m
    replicaset.apps/awx-operator-controller-manager-79ff9599d8   1         1         1       119m
    NAME                                    READY   AGE
    statefulset.apps/awx-demo-postgres-13   1/1     3h
    NAME                           AGE
    awx.awx.ansible.com/awx-demo   13h
    NAME                                     HOLDER                                                                                  AGE
    lease.coordination.k8s.io/awx-operator   awx-operator-controller-manager-79ff9599d8-m7t8k_7502aa73-eaad-4b61-868e-4af77edaf856   5d7h
    NAME                                                                                   ADDRESSTYPE   PORTS   ENDPOINTS        AGE
    endpointslice.discovery.k8s.io/awx-demo-postgres-13-4tc87                              IPv4          5432    10.244.171.180   3h
    endpointslice.discovery.k8s.io/awx-demo-service-6gs4d                                  IPv4          8052    10.244.171.181   116m
    endpointslice.discovery.k8s.io/awx-operator-controller-manager-metrics-service-7wtml   IPv4          8443    10.244.171.178   119m
    LAST SEEN   TYPE     REASON    OBJECT                          MESSAGE
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Successfully pulled image "quay.io/ansible/awx-ee:latest" in 1h16m36.915786211s
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container init
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container init
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "docker.io/redis:7" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container redis
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container redis
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "quay.io/ansible/awx:21.7.0" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container awx-demo-web
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container awx-demo-web
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "quay.io/ansible/awx:21.7.0" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container awx-demo-task
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container awx-demo-task
    40m         Normal   Pulled    pod/awx-demo-65d9bf775b-hc58x   Container image "quay.io/ansible/awx-ee:latest" already present on machine
    40m         Normal   Created   pod/awx-demo-65d9bf775b-hc58x   Created container awx-demo-ee
    40m         Normal   Started   pod/awx-demo-65d9bf775b-hc58x   Started container awx-demo-ee
    NAME                                                                             ROLE                                     AGE
    rolebinding.rbac.authorization.k8s.io/awx-demo                                   Role/awx-demo                            116m
    rolebinding.rbac.authorization.k8s.io/awx-operator-awx-manager-rolebinding       Role/awx-operator-awx-manager-role       119m
    rolebinding.rbac.authorization.k8s.io/awx-operator-leader-election-rolebinding   Role/awx-operator-leader-election-role   119m
    NAME                                                               CREATED AT
    role.rbac.authorization.k8s.io/awx-demo                            2022-10-15T14:19:31Z
    role.rbac.authorization.k8s.io/awx-operator-awx-manager-role       2022-10-15T14:17:13Z
    role.rbac.authorization.k8s.io/awx-operator-leader-election-role   2022-10-15T14:17:13Z
    ┌──[root@vms81.liruilongs.github.io]-[~/ansible]
    └─$

Well, that is all I have to share about installing AWX with Helm. Keep going, everyone ^_^

References

https://blog.csdn.net/m0_51691302/article/details/126288338

https://zenn.dev/asterisk9101/articles/kubernetes-1

Also: the kube-rbac-proxy:v0.13.0 image has been uploaded to CSDN (0 points required); anyone who needs it can download it here:

https://download.csdn.net/download/sanhewuyang/86765668