k8s: Dynamic Scaling of a One-Master, Multi-Replica Redis

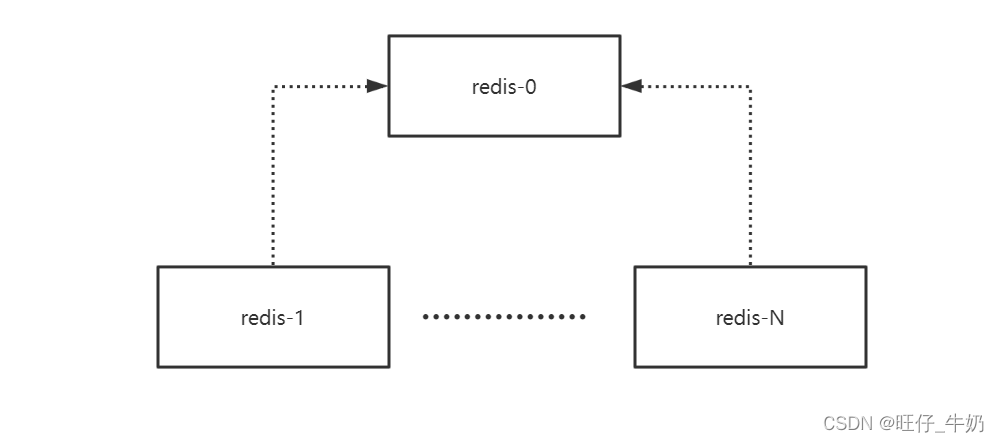

Architecture

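A StatefulSet runs Redis as one master with multiple replicas, and the replicas can be scaled out and in dynamically. Volumes are provisioned dynamically through an NFS-backed StorageClass, so each Redis instance's data is stored persistently.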
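Each replica locates the master through the stable DNS name that a headless Service gives every StatefulSet pod: `<pod>.<service>.<namespace>.svc.<cluster-domain>`. The sketch below builds these names for this setup. It assumes StatefulSet `redis`, Service `redis`, namespace `redis`, and the default `cluster.local` cluster domain. The function name `redis_pod_fqdn` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the per-pod DNS name of StatefulSet pod <ordinal>.
# Assumes StatefulSet "redis", headless Service "redis", namespace "redis",
# and the default cluster domain "cluster.local".
redis_pod_fqdn() {
  local ordinal="$1" sts="redis" svc="redis" ns="redis" domain="cluster.local"
  printf '%s-%s.%s.%s.svc.%s\n' "$sts" "$ordinal" "$svc" "$ns" "$domain"
}

# redis-0 is the master; every other ordinal replicates from this name.
redis_pod_fqdn 0   # prints redis-0.redis.redis.svc.cluster.local
```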

| Hostname | IP |
|---|---|
| k8s-master-1 | 192.168.0.10 |
| k8s-node-1 | 192.168.0.11 |
| k8s-nfs | 192.168.0.55 |

nfs-provider
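The manifest below ends with a StorageClass whose `provisioner` field must equal the provisioner Deployment's `PROVISIONER_NAME` environment variable. If the two differ, no provisioner claims the PVCs and they stay Pending. After the manifest is applied, the sketch below can compare the two values. The function name is hypothetical. It assumes StorageClass `redis-nfs` and Deployment `nfs-client-provisioner` in namespace `redis`, and it relies only on standard `kubectl get -o jsonpath` queries.

```shell
#!/bin/sh
# Hypothetical check: does the StorageClass point at the provisioner the Deployment registers?
# Assumes StorageClass "redis-nfs" and Deployment "nfs-client-provisioner" in namespace "redis".
check_provisioner_name() {
  local sc_name dep_name
  sc_name=$(kubectl get storageclass redis-nfs -o jsonpath='{.provisioner}') || return 1
  dep_name=$(kubectl get deployment nfs-client-provisioner -n redis \
    -o jsonpath='{.spec.template.spec.containers[0].env[?(@.name=="PROVISIONER_NAME")].value}') || return 1
  if [ "$sc_name" = "$dep_name" ]; then
    echo "ok: $sc_name"
  else
    echo "mismatch: storageclass=$sc_name deployment=$dep_name" >&2
    return 1
  fi
}
```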

```shell
# NFS export configuration on k8s-nfs
[root@k8s-nfs ~]# cat /etc/exports
/data 192.168.0.0/24(rw,all_squash)
[root@k8s-master-1 1master-Nslave]# cat nfs-provider.yaml
```

```yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: redis
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: redis
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: redis
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: redis
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: redis
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: redis
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      nodeName: k8s-master-1        # run on the master node
      tolerations:                  # tolerate the master's NoSchedule taint (it has no value)
        - key: node-role.kubernetes.io/master
          operator: Exists
          effect: NoSchedule
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-hangzhou.aliyuncs.com/jiayu-kubernetes/nfs-subdir-external-provisioner:v4.0.0
          imagePullPolicy: IfNotPresent
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 192.168.0.55
            - name: NFS_PATH
              value: /data
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.0.55      # NFS server IP
            path: /data
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: redis-nfs                   # StorageClass is cluster-scoped, so it takes no namespace
  annotations:
    storageclass.kubernetes.io/is-default-class: "false"   # whether this is the default StorageClass
provisioner: k8s/nfs-subdir-external-provisioner   # must match the Deployment's PROVISIONER_NAME env
allowVolumeExpansion: true
reclaimPolicy: Delete
parameters:
  archiveOnDelete: "false"
```

redis.conf

```shell
[root@k8s-master-1 1master-Nslave]# cat configmap.yaml
```

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: redis
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: redis
  namespace: redis
data:
  redis.conf: |-
    daemonize no
    protected-mode no
    bind 0.0.0.0
    port 6379
    timeout 300
    databases 16
    save 900 1
    save 300 10
    save 60 10000
    loglevel notice
    logfile /data/redis.log
    ######## RDB ########
    dir /data
    stop-writes-on-bgsave-error yes
    rdbcompression yes
    dbfilename dump.rdb
    ######## REPLICATION ########
    slave-read-only yes
    repl-diskless-sync no
    repl-timeout 120
    ######## SECURITY ########
    requirepass 123456
    masterauth 123456
    ######## AOF ########
    appendonly no
    appendfilename "appendonly.aof"
    appendfsync no
```

statefulset

```shell
[root@k8s-master-1 1master-Nslave]# cat statefulset.yaml
```

```yaml
apiVersion: v1
kind: Service
metadata:
  name: redis
  namespace: redis
spec:
  selector:
    app: redis
  ports:
    - name: redis
      port: 6379
      targetPort: 6379
      protocol: TCP
  clusterIP: None      # headless Service: each pod gets a stable DNS name
  type: ClusterIP
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: redis
  namespace: redis
spec:
  replicas: 2          # one master and one replica by default
  selector:
    matchLabels:
      app: redis
  serviceName: redis
  template:
    metadata:
      labels:
        app: redis
    spec:
      containers:
        - name: redis
          image: redis:5
          imagePullPolicy: IfNotPresent
          command:
            - /bin/sh
            - -c
            - |
              set -e
              # pod hostnames are redis-<ordinal>; keep only the ordinal
              host=$(hostname)
              ordinal="${host##*-}"
              if [ "$ordinal" != "0" ]; then
                # not the first pod: replicate from redis-0
                exec redis-server /etc/redis.conf --slaveof redis-0.redis.redis.svc.cluster.local 6379
              else
                exec redis-server /etc/redis.conf
              fi
          volumeMounts:
            - name: redis-data
              mountPath: /data
            - name: redis-conf
              mountPath: /etc/redis.conf
              subPath: redis.conf
      volumes:
        - name: redis-conf
          configMap:
            name: redis
  volumeClaimTemplates:
    - metadata:
        name: redis-data
        namespace: redis
      spec:
        accessModes: ["ReadWriteMany"]
        storageClassName: redis-nfs
        resources:
          requests:
            storage: 500Mi
```

Deploy
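Before deploying, you can exercise the role selection from the startup script on its own. In that script, redis-0 starts as the master and every other ordinal starts as a replica of redis-0. The sketch below uses `--slaveof`, the documented `--option` command-line form of the `slaveof` directive. It reads the ordinal with shell parameter expansion and prints the `redis-server` arguments each pod would get. The function name `redis_start_args` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper mirroring the StatefulSet startup script's role selection:
# given a pod hostname such as "redis-2", print the arguments redis-server would get.
redis_start_args() {
  local host="$1" ordinal
  ordinal="${host##*-}"   # drop everything up to the last "-"
  case "$ordinal" in
    ''|*[!0-9]*) echo "unexpected hostname: $host" >&2; return 1 ;;
  esac
  if [ "$ordinal" = "0" ]; then
    echo "/etc/redis.conf"   # redis-0 starts as the master
  else
    echo "/etc/redis.conf --slaveof redis-0.redis.redis.svc.cluster.local 6379"
  fi
}

for h in redis-0 redis-1 redis-3; do
  printf '%s: %s\n' "$h" "$(redis_start_args "$h")"
done
```

Because the hostname check only looks at the text after the last `-`, ordinals with more than one digit (for example redis-10) are handled the same way as single-digit ones.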

```shell
# Deploy the ConfigMap (configmap.yaml also creates the redis namespace)
[root@k8s-master-1 1master-Nslave]# kubectl apply -f configmap.yaml
namespace/redis created
configmap/redis created

# Deploy the NFS provisioner
[root@k8s-master-1 1master-Nslave]# kubectl apply -f nfs-provider.yaml
serviceaccount/nfs-client-provisioner created
clusterrole.rbac.authorization.k8s.io/nfs-client-provisioner-runner created
clusterrolebinding.rbac.authorization.k8s.io/run-nfs-client-provisioner created
role.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
rolebinding.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
deployment.apps/nfs-client-provisioner created
storageclass.storage.k8s.io/redis-nfs created

# Deploy the StatefulSet
[root@k8s-master-1 1master-Nslave]# kubectl apply -f statefulset.yaml
service/redis created
statefulset.apps/redis created

# Check the pods
[root@k8s-master-1 1master-Nslave]# kubectl get pods -n redis
NAME                                      READY   STATUS    RESTARTS   AGE
nfs-client-provisioner-7f6758d967-l9b5p   1/1     Running   0          2m10s
redis-0                                   1/1     Running   0          100s
redis-1                                   1/1     Running   0          53s

# Check the PVCs and PVs
[root@k8s-master-1 1master-Nslave]# kubectl get pvc -n redis
NAME                 STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
redis-data-redis-0   Bound    pvc-e0642424-f6b3-405f-9d12-d53b277be042   500Mi      RWX            redis-nfs      2m21s
redis-data-redis-1   Bound    pvc-5925df68-31da-41c9-9655-7e9bd4c9bfb6   500Mi      RWX            redis-nfs      94s
[root@k8s-master-1 1master-Nslave]# kubectl get pv
NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                      STORAGECLASS   REASON   AGE
pvc-5925df68-31da-41c9-9655-7e9bd4c9bfb6   500Mi      RWX            Delete           Bound    redis/redis-data-redis-1   redis-nfs               96s
pvc-e0642424-f6b3-405f-9d12-d53b277be042   500Mi      RWX            Delete           Bound    redis/redis-data-redis-0   redis-nfs               2m23s

# Check the data directories on the k8s-nfs node
[root@k8s-nfs ~]# ls /data
redis-redis-data-redis-0-pvc-e0642424-f6b3-405f-9d12-d53b277be042  redis-redis-data-redis-1-pvc-5925df68-31da-41c9-9655-7e9bd4c9bfb6

# Check replication on redis-1
[root@k8s-master-1 1master-Nslave]# kubectl exec redis-1 -n redis -- redis-cli -a 123456 info replication
# Replication
role:slave                                          # replica
master_host:redis-0.redis.redis.svc.cluster.local   # master
master_port:6379
master_link_status:up
master_last_io_seconds_ago:7
master_sync_in_progress:0
slave_repl_offset:252
slave_priority:100
slave_read_only:1
connected_slaves:0
master_replid:03c3dd18042139cbe116946f6c46c640fb91681b
master_replid2:0000000000000000000000000000000000000000
master_repl_offset:252
second_repl_offset:-1
repl_backlog_active:1
repl_backlog_size:1048576
repl_backlog_first_byte_offset:1
repl_backlog_histlen:252
```

Scale out

Scaling out adds pods in ordinal order, from redis-0 up to redis-N.
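The scale-out and the replication check shown below can be wrapped in one function. This is a sketch under several assumptions:

- The function name is hypothetical.
- `kubectl` points at this cluster.
- The password `123456` comes from `redis.conf`.
- The StatefulSet keeps its default RollingUpdate strategy, which `kubectl rollout status` requires.

The function waits until every requested pod is Ready and then reads each replica's `master_link_status`.

```shell
#!/bin/sh
# Hypothetical helper: scale the redis StatefulSet, wait for it, then check every replica.
# Assumes namespace "redis", StatefulSet "redis", and the password 123456 from redis.conf.
scale_and_check() {
  local replicas="$1" ns="redis" pass="123456" i=1 status
  kubectl scale statefulset redis --replicas="$replicas" -n "$ns" || return 1
  # Blocks until all requested pods report Ready (needs the RollingUpdate strategy)
  kubectl rollout status statefulset/redis -n "$ns" --timeout=300s || return 1
  # Ordinals 1..replicas-1 are replicas; redis-0 is the master
  while [ "$i" -lt "$replicas" ]; do
    # stderr is dropped to hide redis-cli's warning about passing -a on the command line
    status=$(kubectl exec "redis-$i" -n "$ns" -- redis-cli -a "$pass" info replication 2>/dev/null \
      | tr -d '\r' | awk -F: '$1 == "master_link_status" { print $2 }')
    echo "redis-$i master_link_status=${status:-unknown}"
    [ "$status" = "up" ] || return 1
    i=$((i + 1))
  done
}
```

For example, `scale_and_check 4` reproduces the scale-out below and fails as soon as one replica reports a link status other than `up`.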

```shell
# Scale out
[root@k8s-master-1 1master-Nslave]# kubectl scale statefulset redis --replicas=4 -n redis
statefulset.apps/redis scaled

# Check the pods
[root@k8s-master-1 1master-Nslave]# kubectl get pods -n redis
NAME                                      READY   STATUS    RESTARTS   AGE
nfs-client-provisioner-7f6758d967-l9b5p   1/1     Running   0          6m52s
redis-0                                   1/1     Running   0          6m22s
redis-1                                   1/1     Running   0          5m35s
redis-2                                   1/1     Running   0          16s
redis-3                                   1/1     Running   0          11s

# The PVCs and PVs for the new pods were created dynamically
[root@k8s-master-1 1master-Nslave]# kubectl get pvc -n redis
NAME                 STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
redis-data-redis-0   Bound    pvc-e0642424-f6b3-405f-9d12-d53b277be042   500Mi      RWX            redis-nfs      7m
redis-data-redis-1   Bound    pvc-5925df68-31da-41c9-9655-7e9bd4c9bfb6   500Mi      RWX            redis-nfs      6m13s
redis-data-redis-2   Bound    pvc-521d77ec-64df-44e1-8a49-22d032046b86   500Mi      RWX            redis-nfs      54s
redis-data-redis-3   Bound    pvc-365be101-2ced-4800-9db0-be68fdedd64d   500Mi      RWX            redis-nfs      49s
[root@k8s-master-1 1master-Nslave]# kubectl get pv
NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                      STORAGECLASS   REASON   AGE
pvc-365be101-2ced-4800-9db0-be68fdedd64d   500Mi      RWX            Delete           Bound    redis/redis-data-redis-3   redis-nfs               55s
pvc-521d77ec-64df-44e1-8a49-22d032046b86   500Mi      RWX            Delete           Bound    redis/redis-data-redis-2   redis-nfs               60s
pvc-5925df68-31da-41c9-9655-7e9bd4c9bfb6   500Mi      RWX            Delete           Bound    redis/redis-data-redis-1   redis-nfs               6m19s
pvc-e0642424-f6b3-405f-9d12-d53b277be042   500Mi      RWX            Delete           Bound    redis/redis-data-redis-0   redis-nfs               7m6s

# Check replication: the new replica is in sync with the master
[root@k8s-master-1 1master-Nslave]# kubectl exec redis-3 -n redis -- redis-cli -a 123456 info replication
# Replication
role:slave
master_host:redis-0.redis.redis.svc.cluster.local
master_port:6379
master_link_status:up
master_last_io_seconds_ago:1
master_sync_in_progress:0
slave_repl_offset:630
slave_priority:100
slave_read_only:1
connected_slaves:0
master_replid:03c3dd18042139cbe116946f6c46c640fb91681b
master_replid2:0000000000000000000000000000000000000000
master_repl_offset:630
second_repl_offset:-1
repl_backlog_active:1
repl_backlog_size:1048576
repl_backlog_first_byte_offset:449
repl_backlog_histlen:182
Warning: Using a password with '-a' or '-u' option on the command line interface may not be safe.
```

Scale in

Scaling in removes pods in reverse ordinal order, from redis-N down toward redis-0.
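Scaling in leaves the PVCs of the removed pods in place, as the transcript below shows. The minimal sketch here lists those retained claims: it reads PVC names on standard input and prints the ones whose ordinal is at or above the current replica count. The function name is hypothetical, and it assumes the `redis-data-redis-<ordinal>` claim naming used in this setup.

```shell
#!/bin/sh
# Hypothetical helper: read PVC names on stdin and print those whose pod ordinal is
# >= the current replica count, i.e. claims kept after a scale-in.
# Assumes the claim naming used here: redis-data-redis-<ordinal>.
unused_redis_pvcs() {
  local replicas="$1" name ordinal
  while read -r name; do
    case "$name" in
      redis-data-redis-*) ordinal="${name##*-}" ;;
      *) continue ;;
    esac
    case "$ordinal" in
      ''|*[!0-9]*) continue ;;   # skip names without a numeric ordinal
    esac
    if [ "$ordinal" -ge "$replicas" ]; then
      echo "$name"
    fi
  done
}
```

Usage: `kubectl get pvc -n redis -o name | sed 's#^persistentvolumeclaim/##' | unused_redis_pvcs 1`. With the claims listed below, this prints redis-data-redis-1, redis-data-redis-2 and redis-data-redis-3. These retained claims are what let a later scale-out reattach to the same data. With `reclaimPolicy: Delete` and `archiveOnDelete: "false"`, deleting one of them also removes its PV and its directory on the NFS server.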

```shell
# Scale in
[root@k8s-master-1 1master-Nslave]# kubectl scale statefulset redis --replicas=1 -n redis
statefulset.apps/redis scaled

# Check the pods
[root@k8s-master-1 1master-Nslave]# kubectl get pods -n redis
NAME                                      READY   STATUS    RESTARTS   AGE
nfs-client-provisioner-7f6758d967-l9b5p   1/1     Running   0          11m
redis-0                                   1/1     Running   0          11m

# Check the PVs and PVCs: although the pods were deleted, their PVCs and PVs were not.
# When we scale out again, each recreated pod reuses its previous PV.
[root@k8s-master-1 1master-Nslave]# kubectl get pv
NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                      STORAGECLASS   REASON   AGE
pvc-365be101-2ced-4800-9db0-be68fdedd64d   500Mi      RWX            Delete           Bound    redis/redis-data-redis-3   redis-nfs               6m29s
pvc-521d77ec-64df-44e1-8a49-22d032046b86   500Mi      RWX            Delete           Bound    redis/redis-data-redis-2   redis-nfs               6m34s
pvc-5925df68-31da-41c9-9655-7e9bd4c9bfb6   500Mi      RWX            Delete           Bound    redis/redis-data-redis-1   redis-nfs               11m
pvc-e0642424-f6b3-405f-9d12-d53b277be042   500Mi      RWX            Delete           Bound    redis/redis-data-redis-0   redis-nfs               12m
[root@k8s-master-1 1master-Nslave]# kubectl get pvc -n redis
NAME                 STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
redis-data-redis-0   Bound    pvc-e0642424-f6b3-405f-9d12-d53b277be042   500Mi      RWX            redis-nfs      12m
redis-data-redis-1   Bound    pvc-5925df68-31da-41c9-9655-7e9bd4c9bfb6   500Mi      RWX            redis-nfs      11m
redis-data-redis-2   Bound    pvc-521d77ec-64df-44e1-8a49-22d032046b86   500Mi      RWX            redis-nfs      6m39s
redis-data-redis-3   Bound    pvc-365be101-2ced-4800-9db0-be68fdedd64d   500Mi      RWX            redis-nfs      6m34s
```