Stable Diffusion [Basics]: Prompt Guidance Scale (CFG Scale)

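Hi everyone, I'm 极客菌.

The CFG (Classifier-Free Guidance) scale controls how strictly Stable Diffusion follows the prompt during sampling. Almost every Stable Diffusion image generator exposes this setting. Today we look at how the CFG parameter works in Stable Diffusion.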

1. What is CFG

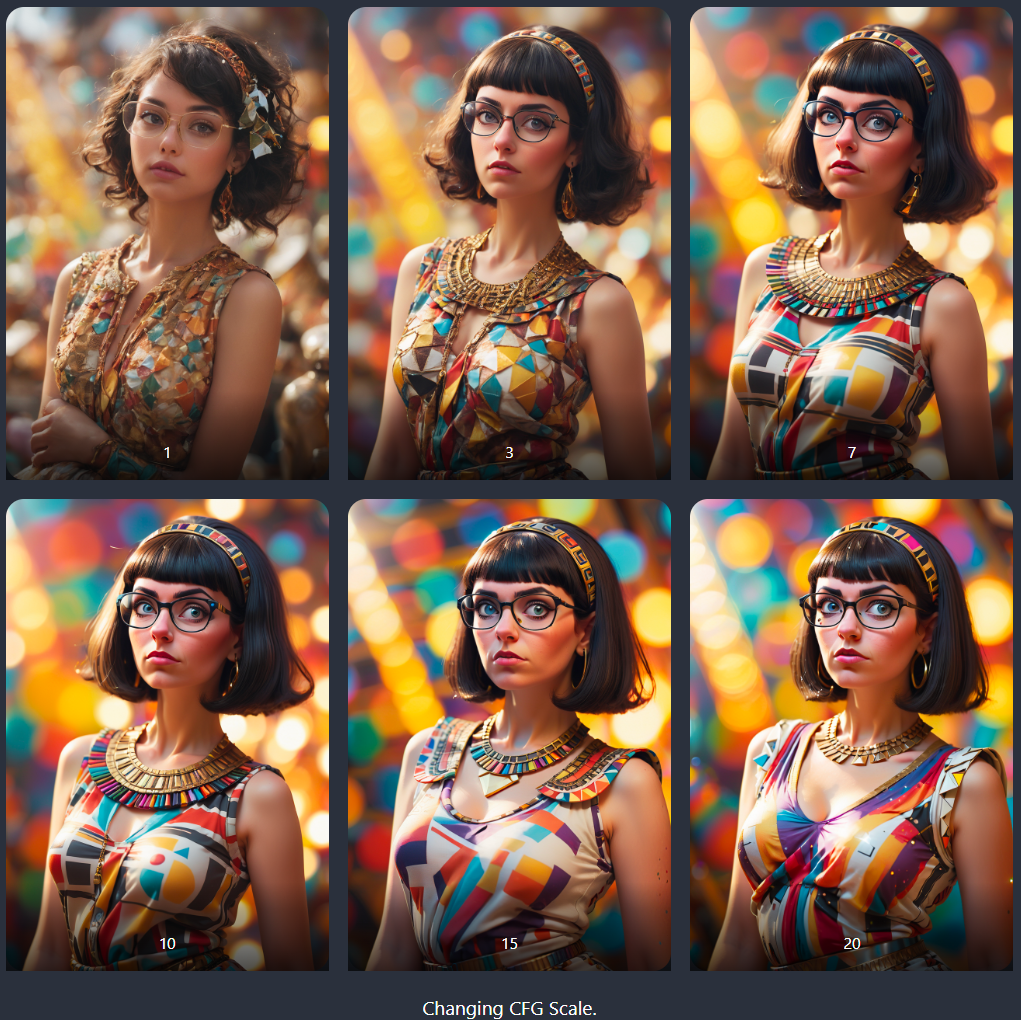

Let's start with an example of how different CFG values affect the result.

Prompt: breathtaking, cans, geometric patterns, dynamic pose, Eclectic, colorful, and outfit, full body portrait, portrait, close up of a Nerdy Cleopatra, she is embarrassed, surreal, Bokeh, Proud, Bardcore, Lens Flare, painting, pavel, sokov

Checkpoint model: Protovision XL High Fidelity 3D

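At a value of 1, the image barely follows the prompt and looks flat and lifeless.

At a value of 3, the style described by the prompt starts to appear.

At the typical value of 7, the image already resembles those produced at higher CFG values.

Higher CFG values tend to produce similar images, with colors becoming increasingly saturated.

The CFG value is usually set between 7 and 10, which lets the prompt guide the image without oversaturating it.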

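2. CFG values for LCM and SDXL Turbo

Fast-sampling models such as LCM LoRA and SDXL Turbo need much lower CFG values.

With LCM LoRA, set CFG to around 1 to 2; 1.5 is a common choice.

With SDXL Turbo, set CFG to around 1 to 1.2; 1 is a common choice.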
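The starting values mentioned so far can be collected into a small lookup helper. This is only an illustrative sketch: the dictionary keys and the names `suggested_cfg` and `in_suggested_range` are hypothetical, and the numbers simply restate the ranges given in this article.

```python
# Suggested CFG starting points as stated in this article.
# The keys and helper names are hypothetical, chosen for illustration only.
SUGGESTED_CFG = {
    "standard": {"low": 7.0, "high": 10.0, "typical": 7.0},
    "lcm_lora": {"low": 1.0, "high": 2.0, "typical": 1.5},
    "sdxl_turbo": {"low": 1.0, "high": 1.2, "typical": 1.0},
}


def suggested_cfg(model_kind: str) -> float:
    """Return the typical CFG value listed for a kind of model."""
    if model_kind not in SUGGESTED_CFG:
        raise ValueError(f"unknown model kind: {model_kind!r}")
    return SUGGESTED_CFG[model_kind]["typical"]


def in_suggested_range(model_kind: str, cfg: float) -> bool:
    """Check whether a CFG value falls inside the range listed for the model."""
    if model_kind not in SUGGESTED_CFG:
        raise ValueError(f"unknown model kind: {model_kind!r}")
    entry = SUGGESTED_CFG[model_kind]
    return entry["low"] <= cfg <= entry["high"]
```

For example, `in_suggested_range("sdxl_turbo", 7.0)` returns `False`, a reminder to lower the CFG value when switching from a regular checkpoint to a fast-sampling model.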

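3. How CFG works: Classifier Guidance (CG)

To understand CFG, you first need to understand its predecessor: classifier guidance.

Classifier guidance is a way to bring image labels into a diffusion model, so that a label can steer the diffusion process. For example, the label "cat" steers the reverse diffusion process toward generating an image of a cat.

The classifier guidance scale is a parameter that controls how closely the diffusion process should follow the given label.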

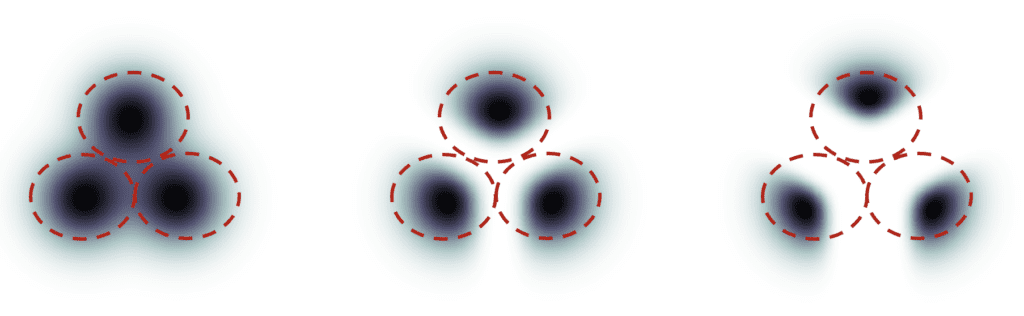

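Suppose there are three groups of images labeled "cat", "dog", and "human". Without guidance, the diffusion model samples evenly from all three groups while drawing. As a result, it sometimes produces images that contain content from two labels, such as a boy walking a dog.

Classifier guidance. Left: no guidance. Middle: weak guidance. Right: strong guidance.

With strong classifier guidance, the diffusion model produces images biased toward the extreme or unambiguous examples of a label. If you ask the model for a cat, it returns an image that is unmistakably a cat and contains nothing else.

The classifier guidance scale controls how closely the diffusion process follows the guidance target. In the figure above, the samples on the right have a higher classifier guidance scale than those in the middle. In practice, this scale is simply a multiplier on a drift term in the diffusion model's computation.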
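To make "a multiplier on a drift term" concrete, here is one common way to write a classifier-guided sampling step. The notation is an assumption and is not taken from this article: $\mu$ and $\Sigma$ are the mean and covariance the diffusion model predicts for the step, $p_\phi(y \mid x_t)$ is the separate classifier's probability of label $y$ for the noisy image $x_t$, and $s$ is the classifier guidance scale.

$$
x_{t-1} \sim \mathcal{N}\big(\mu + s\,\Sigma\,\nabla_{x_t}\log p_\phi(y \mid x_t),\ \Sigma\big)
$$

With $s = 0$ the shift vanishes and sampling is unguided; a larger $s$ pushes each step further toward images that the classifier confidently assigns to $y$.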

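4. How CFG works

Although classifier guidance brought a breakthrough in diffusion model quality, it needs an extra model to provide the guidance, which complicates training.

Classifier-free guidance, in its authors' words, is a way to get the results of classifier guidance without a classifier. Instead of using class labels and a separate model for guidance, they proposed using image captions to train a conditional diffusion model.

They fold the classifier part into the conditioning of the noise predictor U-Net, which achieves so-called "classifier-free" guidance (that is, guidance without a separate image classifier) in image generation.

In text-to-image generation, this guidance comes from the text prompt.

Now that we have a classifier-free diffusion process that can be conditioned, how do we control how closely it follows the guidance?

The classifier-free guidance scale (CFG Scale) is a value that controls how much the text prompt influences the diffusion process. When it is set to 0, image generation is unguided (the prompt is ignored), while higher values push the diffusion process closer to the prompt.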
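One common way to write this down, in the convention used by most Stable Diffusion interfaces, is the following. The symbols are assumptions for illustration: $\epsilon_\theta(x_t, c)$ is the noise predicted with the prompt $c$, $\epsilon_\theta(x_t, \varnothing)$ is the noise predicted with an empty prompt, and $s$ is the CFG Scale.

$$
\hat{\epsilon} = \epsilon_\theta(x_t, \varnothing) + s\,\big(\epsilon_\theta(x_t, c) - \epsilon_\theta(x_t, \varnothing)\big)
$$

At $s = 0$ only the unconditional prediction remains, so the prompt is ignored. At $s = 1$ the result is the plain conditional prediction, and $s > 1$ extrapolates past it, away from the unconditional prediction.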

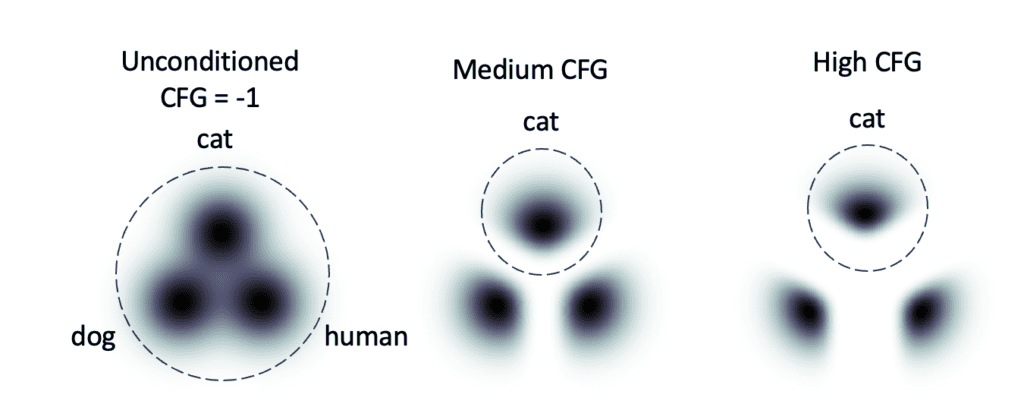

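Suppose there are three groups of images, one for each of three prompts: cat, dog, and human.

You enter the prompt: a cat.

- If the CFG Scale is 0, the prompt is ignored. You are equally likely to get a cat, a dog, or a human.

- If the CFG Scale is moderate (7 to 10), the prompt is followed. You always get a cat.

- If the CFG Scale is high (above 10), you get even more unambiguous cat images.

Classifier-free guidance.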

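5. Using CFG in the sampling steps

Now we know how CFG works. You may wonder what the best CFG value is.

The answer: there is a reasonable range (7 to 10), but no single best value.

The CFG Scale sets a trade-off between accuracy and diversity. High CFG values give more accurate images; low CFG values give more diverse images.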

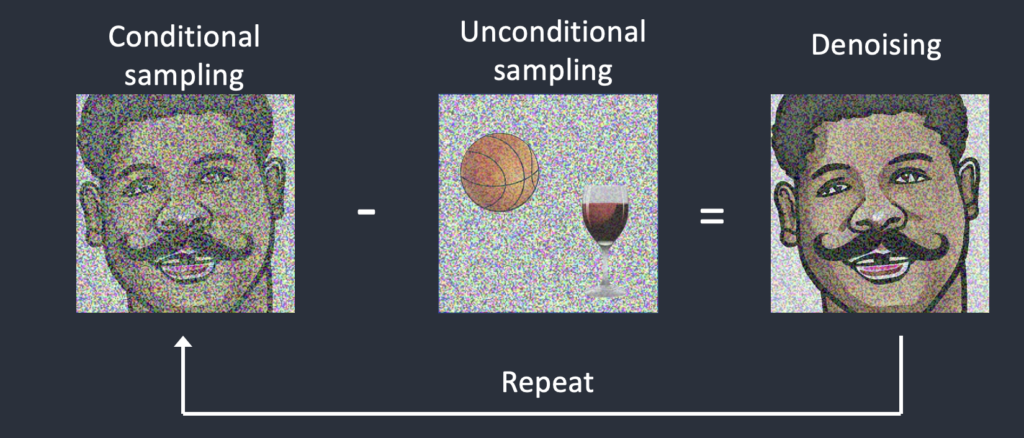

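So how is the CFG Scale actually used? It is applied at every sampling step.

(1) Start from a random image.

(2) Estimate the noise in the image twice: once conditioned on the prompt, and once fully unconditioned.

(3) Move the image in the direction of the difference between the conditional and unconditional noise. The CFG Scale controls how large this step is.

(4) Adjust the noise of the image according to the noise schedule.

Repeat steps 2 to 4 until the sampling steps are finished.

So using CFG requires two noise estimates per step: one conditioned on the text and one unconditioned.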
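The loop can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not how Stable Diffusion is implemented: `toy_predict_noise` is a hypothetical stand-in for the U-Net, images are short lists of floats, and the constant step size replaces a real noise schedule. Only the structure (two noise estimates per step, combined using the CFG Scale) mirrors the steps listed above.

```python
import random
from typing import Callable, List

Vector = List[float]
NoisePredictor = Callable[[Vector, str], Vector]


def cfg_combine(eps_uncond: Vector, eps_cond: Vector, scale: float) -> Vector:
    """Step 3: start at the unconditional noise and move `scale` times
    along the difference (conditional - unconditional)."""
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


def toy_predict_noise(x: Vector, prompt: str) -> Vector:
    """Hypothetical stand-in for the U-Net: treats the offset from a
    prompt-dependent target as the 'noise' in x."""
    target = 1.0 if prompt else 0.0  # an empty prompt means "unconditional"
    return [xi - target for xi in x]


def sample(predict_noise: NoisePredictor, prompt: str, steps: int = 20,
           scale: float = 7.0, dim: int = 4, seed: int = 0) -> Vector:
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]       # (1) random start
    for _ in range(steps):
        eps_cond = predict_noise(x, prompt)             # (2) conditioned on the prompt
        eps_uncond = predict_noise(x, "")               # (2) unconditioned
        eps = cfg_combine(eps_uncond, eps_cond, scale)  # (3) guided noise
        step_size = 1.0 / steps                         # (4) toy constant "schedule"
        x = [xi - step_size * e for xi, e in zip(x, eps)]
    return x
```

Calling `sample(toy_predict_noise, "a cat", scale=0.0)` gives the same result as sampling with an empty prompt, which matches the statement that a scale of 0 ignores the prompt.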

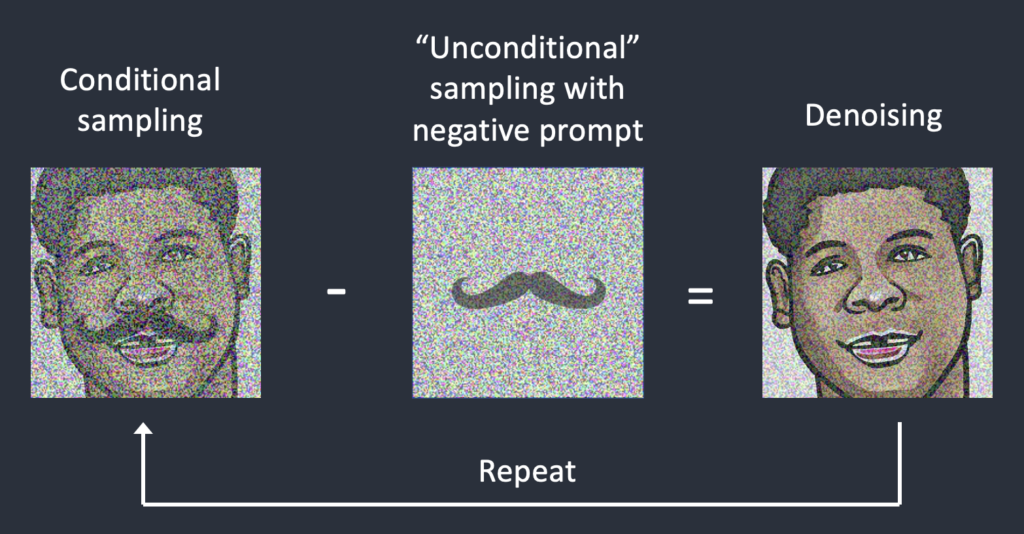

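6. Negative prompts and CFG

You may wonder where negative prompts come in. The model is not trained with negative prompts, and the original classifier-free guidance sampling procedure does not use them either!

Negative prompts are a hack: they work by replacing the unconditional noise with the noise predicted from the negative prompt during the sampling steps.

Without a negative prompt, an empty (blank) token is used to predict the unconditional noise. The image moves toward the prompt and away from random subjects.

With a negative prompt, it is used to predict the "unconditional" noise instead. The image now moves toward the prompt and away from the negative prompt.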
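The substitution can be sketched as a single function. This is a minimal illustration with hypothetical names (`guided_noise`, `eps_prompt`, `eps_empty`, `eps_negative`); the noise predictions are plain lists of floats that would come from the noise predictor in a real sampler.

```python
from typing import List, Optional

Vector = List[float]


def guided_noise(eps_prompt: Vector, eps_empty: Vector, scale: float,
                 eps_negative: Optional[Vector] = None) -> Vector:
    """Combine noise predictions with CFG.

    Without a negative prompt, the baseline is the noise predicted from an
    empty prompt. With one, the negative-prompt prediction takes its place,
    so the result moves away from the negative prompt instead.
    """
    baseline = eps_empty if eps_negative is None else eps_negative
    return [b + scale * (p - b) for p, b in zip(eps_prompt, baseline)]
```

Note that only the baseline changes; the CFG Scale and the prompt prediction are used exactly as before.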

That's all for today. I hope you found it helpful.
